Data Annotation in AI: The Foundation Most People Ignore

January 2026

AI, Data Annotation, Human in the Loop

Behind every successful AI system lies a large volume of carefully annotated data. While algorithms and architectures often get the spotlight, data annotation is the silent foundation that determines whether an AI model performs well or fails in real-world scenarios.

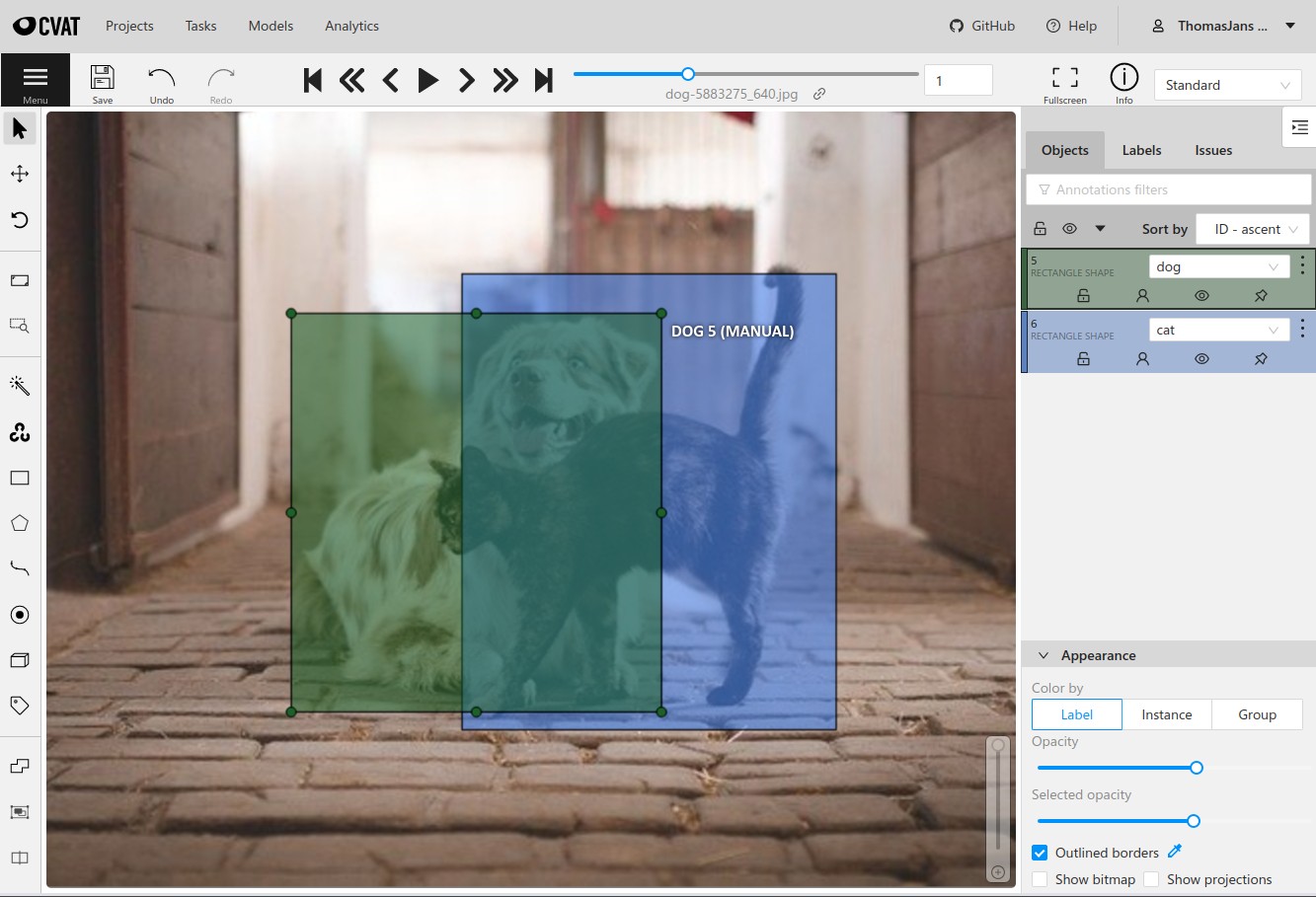

Data annotation is the process of labeling raw data such as images, videos, text, or audio so that machine learning models can learn patterns from it. These labels act as ground truth, guiding the model toward correct predictions. Poor annotations lead to poor learning, no matter how advanced the model architecture is.

In modern AI systems, annotation is no longer just about drawing bounding boxes or tagging objects. It involves contextual understanding, intent recognition, temporal reasoning, and even subjective judgment in areas like sentiment, aesthetics, or human behavior.

This is where the human-in-the-loop approach becomes critical. Skilled annotators, reviewers, and quality analysts ensure consistency, reduce ambiguity, and continuously refine guidelines. High-quality annotation workflows often include multiple review layers, feedback loops, and error analysis to keep model error rates low.
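To make the consistency checks above concrete, here is a minimal sketch of two common quality signals: Cohen's kappa for agreement between two annotators, and a majority-vote consensus that escalates unclear items to a human reviewer. The function names, the `min_agreement` threshold, and the sample labels are illustrative assumptions, not part of any particular annotation tool.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Fraction of items where the two annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both annotators used a single identical label everywhere.
        return 1.0
    return (observed - expected) / (1 - expected)


def consensus(votes, min_agreement=2 / 3):
    """Return the majority label, or None to signal escalation to a reviewer.

    min_agreement is a hypothetical threshold; tune it per project.
    """
    if not votes:
        raise ValueError("At least one vote is required")
    label, count = Counter(votes).most_common(1)[0]
    if count / len(votes) >= min_agreement:
        return label
    return None  # No clear majority: send the item to a second review layer.


if __name__ == "__main__":
    annotator_a = ["pos", "pos", "neg", "neg"]
    annotator_b = ["pos", "neg", "neg", "neg"]
    print(cohen_kappa(annotator_a, annotator_b))  # 0.5
    print(consensus(["cat", "cat", "dog"]))       # cat
    print(consensus(["cat", "dog", "bird"]))      # None
```

A low kappa on a batch is often a sign that the guidelines are ambiguous rather than that the annotators are careless, which is why it pairs naturally with the feedback loops described above.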

As AI moves toward more complex reasoning and multimodal understanding, data annotation is evolving into a specialized skill rather than a mechanical task. Teams that invest in annotation quality ultimately build models that generalize better, behave more responsibly, and perform reliably in production.